Cisco 8000v Throughput on Azure

published: 8th of July 2023

Intro

I was recently troubleshooting an issue that users reported as "intermittent slowness". IMO, intermittent issues are some of the hardest to troubleshoot. In this post, I will outline the issue and cover the eventual solution.

The Scenario

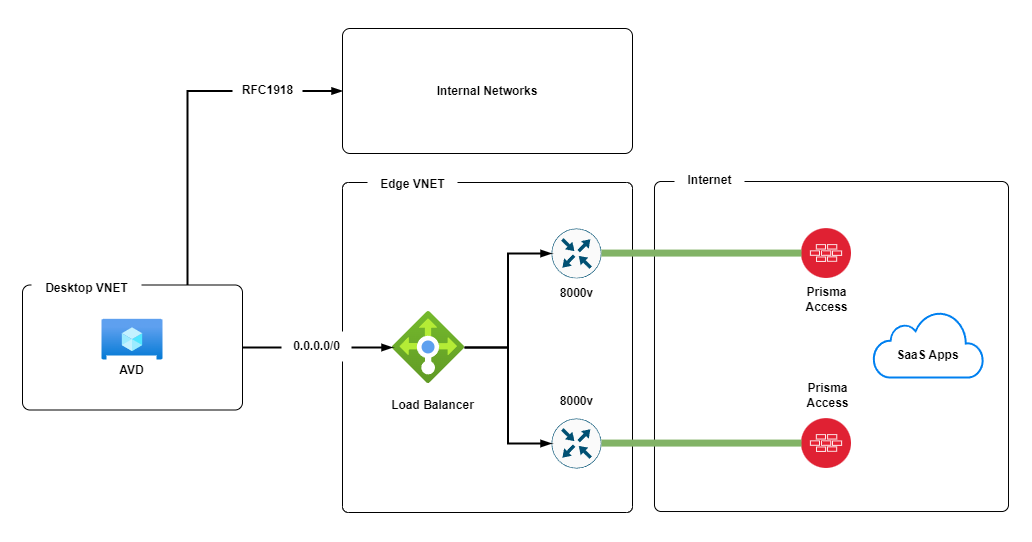

Consider the following scenario.

- Users are assigned Azure Virtual Desktops (AVDs), which they log in to over the internet.

- The AVDs live in a Virtual Network (VNET) that routes internal (RFC1918) traffic on a different path from internet-bound traffic.

- The Desktop VNET has a default route (0.0.0.0/0) whose next hop points to the Azure Load Balancer in the Edge VNET. Internet-bound traffic follows this path.

- In the Edge VNET, the Azure Load Balancer distributes traffic across 2x Cisco 8000v (8Kv) routers.

- The 8Kv routers have IPSec tunnels over the internet to Prisma Access endpoints.

- The user applications are mainly SaaS-based and therefore rely heavily on the internet-bound path.

The Problem

The above design pattern is deployed in multiple regions and had been operating without issues for around 18 months. We were experiencing the issue in only a single region.

The fact that the issue surfaced around the same time each day suggested that the problem was load-related. The time frame correlated with when we had the highest user count, and the region with the problem had the most users.

Looking at our monitoring charts, we could see that the maximum bandwidth we achieved came in short spikes of around 150 Mbps, but never any more than that. Once it hit 150 Mbps, it fell off a cliff.
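If you want to spot the same "climb to a ceiling, then fall off a cliff" pattern in your own monitoring data, a small check over exported throughput samples can flag it. The sketch below is illustrative only: it assumes evenly spaced samples in Mbps, and the function name `find_ceiling_drops`, the 150 Mbps ceiling, the 10% tolerance and the 50% drop threshold are my own assumptions, not anything built into Azure Monitor or the 8Kv.

```python
from typing import List, Tuple


def find_ceiling_drops(
    samples_mbps: List[float],
    ceiling_mbps: float = 150.0,
    tolerance: float = 0.1,
    drop_ratio: float = 0.5,
) -> List[Tuple[int, float, float]]:
    """Flag samples that reach the ceiling and are followed by a sharp drop.

    Returns (index, peak_mbps, next_mbps) tuples. A sample counts as
    "at the ceiling" if it is at or above (1 - tolerance) * ceiling_mbps,
    and as a "cliff" if the next sample is at most (1 - drop_ratio) of it.
    """
    at_ceiling = ceiling_mbps * (1 - tolerance)
    events = []
    # Compare each sample with the one that follows it.
    for i in range(len(samples_mbps) - 1):
        peak, nxt = samples_mbps[i], samples_mbps[i + 1]
        if peak >= at_ceiling and nxt <= peak * (1 - drop_ratio):
            events.append((i, peak, nxt))
    return events


# Hypothetical samples, one per minute, in Mbps.
samples = [40.0, 90.0, 148.0, 20.0, 60.0, 151.0, 155.0, 30.0]
print(find_ceiling_drops(samples))
```

With this made-up series, the samples at index 2 and 6 are flagged: both sit at the ceiling and the next reading drops by more than half.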

During these spikes, the 8Kvs hit a bottleneck and stopped forwarding traffic.

The first suspect was licensing. Were we hitting a licensing limit? It turns out bandwidth licensing was not an issue, as we have 10 Gbps bandwidth licenses.

The next suspect was an IPSec throughput limit. The only document I could find that references IPSec throughput shows that, based on our license, we should be able to achieve 10 Gbps of both encrypted and unencrypted throughput.

On to Microsoft, who confirmed that with the VM size deployed for the 8Kvs (DS4_v2, with 8 vCPUs and 28 GiB of RAM) we could achieve a maximum of 6 Gbps.
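At this point it helps to line up the documented ceilings against what we actually observed. This is just the arithmetic behind the reasoning, written as a small Python sketch; the dictionary keys and the `binding_limit` helper are hypothetical, and the figures are the ones quoted above (10 Gbps license, 10 Gbps IPSec per the Cisco document, 6 Gbps for the DS4_v2).

```python
from typing import Dict, Tuple

# Documented ceilings quoted in this post, in Mbps.
documented_limits_mbps: Dict[str, int] = {
    "8Kv bandwidth license": 10_000,
    "8Kv IPSec throughput (Cisco doc)": 10_000,
    "DS4_v2 expected network bandwidth": 6_000,
}
observed_peak_mbps = 150


def binding_limit(limits: Dict[str, int]) -> Tuple[str, int]:
    """Return (name, value) of the lowest documented ceiling."""
    name = min(limits, key=limits.get)
    return name, limits[name]


name, cap = binding_limit(documented_limits_mbps)
headroom = cap / observed_peak_mbps
print(f"Lowest ceiling: {name} = {cap} Mbps")
print(f"Observed peak is {headroom:.0f}x below it")
```

The lowest documented ceiling (6 Gbps for the VM size) is about 40 times the ~150 Mbps we were peaking at, so none of these limits explained the behaviour.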

We also had Microsoft validate there were no known issues with the Azure infrastructure.

Microsoft also verified that the Azure Load Balancer is not really a hop in the path; the Azure platform passes the traffic directly to the backend nodes.

In parallel, Palo Alto TAC confirmed there were no issues with the Prisma nodes or infrastructure.

So back we went to our old pals Cisco for another loop around the vendor support circle of hell.

We ended up having numerous support calls with Microsoft, Cisco and Palo Alto TAC engineers which went something like this:

The Solution

While the issue was occurring, we could see spikes in ping response time to the 8Kvs of up to 1 second. Even pinging a self IP from the 8Kvs produced very high response times.
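Averages hide this kind of problem, so it is worth looking at the median and the worst case separately. A minimal sketch, assuming you have already collected ping round-trip times in milliseconds; the `rtt_summary` name and the 100 ms spike threshold are hypothetical choices for illustration.

```python
import statistics
from typing import Dict, List


def rtt_summary(rtts_ms: List[float], spike_ms: float = 100.0) -> Dict[str, float]:
    """Summarise ping round-trip times (ms) and count samples above spike_ms."""
    return {
        "median_ms": statistics.median(rtts_ms),
        "max_ms": max(rtts_ms),
        # Number of individual pings slower than the spike threshold.
        "spikes": sum(1 for r in rtts_ms if r > spike_ms),
    }


# Hypothetical RTT samples in milliseconds.
print(rtt_summary([1.2, 0.9, 1.1, 850.0, 1.0, 1000.0, 1.3]))
```

For that made-up series the median stays at 1.2 ms while the maximum is 1000 ms with two spikes: the shape of an intermittent problem rather than a consistently slow path.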

Cisco TAC performed a packet trace and confirmed that, in some instances, there was indeed high latency between when a packet entered and when it exited an interface.

We had noted that Accelerated Networking was not enabled on the 8Kv VMs, and Microsoft suggested that we enable it.

The fix, it turns out, WAS to enable Accelerated Networking on the Cisco 8000v VMs. This allows the VM to bypass the hypervisor's virtual switch and access the host's network interface hardware directly, which gives higher performance as well as reduced latency and jitter.
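To check which NICs still have Accelerated Networking turned off, you can filter the JSON that the Azure CLI returns. A minimal sketch, assuming you have saved the output of `az network nic list --output json` and that each entry carries the `enableAcceleratedNetworking` field as the CLI currently reports it; the function name and NIC names are hypothetical. The setting itself can be changed with `az network nic update --accelerated-networking true`, but check Microsoft's and Cisco's guidance first: my understanding is that the VM has to be stopped (deallocated) for the change, and the VM size must support Accelerated Networking.

```python
import json
from typing import List


def nics_without_accelerated_networking(nic_list_json: str) -> List[str]:
    """Return names of NICs where enableAcceleratedNetworking is not true.

    Expects the JSON array printed by `az network nic list --output json`.
    A missing or null field is treated as "not enabled".
    """
    nics = json.loads(nic_list_json)
    return [
        nic["name"]
        for nic in nics
        if not nic.get("enableAcceleratedNetworking", False)
    ]


# Hypothetical, trimmed-down CLI output for the two 8Kv NICs.
sample = """[
  {"name": "edge-8kv-1-nic0", "enableAcceleratedNetworking": false},
  {"name": "edge-8kv-2-nic0", "enableAcceleratedNetworking": true}
]"""
print(nics_without_accelerated_networking(sample))
```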

Once we enabled Accelerated Networking, we found that the throughput to the internet was around 1.5 Gbps, which is about the maximum you can expect through an IPSec tunnel.

Outro

Based on my experience working on this issue, Cisco 8000vs on Microsoft Azure can achieve around 150 Mbps of IPSec throughput without Accelerated Networking enabled. With Accelerated Networking enabled, they can achieve at least 1.5 Gbps of IPSec throughput, and possibly more for unencrypted traffic. The main lesson learned from this issue: enable Accelerated Networking in Azure for VMs that have high network bandwidth requirements.